- Joined

- Aug 21, 2022

Starting a thread to talk about individual design choices to make our work far more resistant to Indian takeovers.

I have some personal overarching rules I go by that I'll share (I have more for specific use cases, loads), but just from heavy interactions with pajeets:

On this last bullet point, let me talk about something ancient and basic you learned long ago; structured data.

This is remarkably simple at first. (The complexity comes once you introduce multicore/multiuser later on, but it's not that big of a problem.)

In the initial design phase, you start out like this (pseudocode):

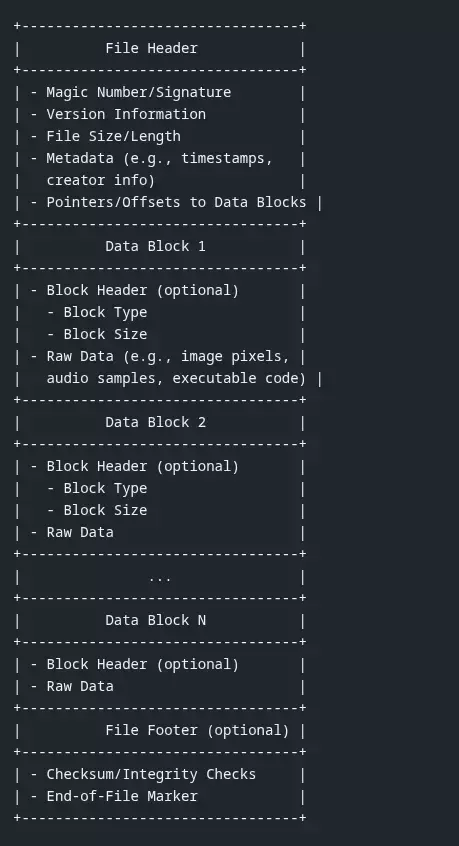

The picture you're about to create looks like this:

Instead of opening a file and reading a blast of bytes as a string, which you and every Indian knows how to do, you're going to be organized. Neat. Tidy. Contractual. So contractual, in fact, that you're always going to read and write bytes in a specific way, using a specific set of rules. YOUR set of rules. You write the contract. You know everything there is to know about this contract, because you're creating every aspect about it in code. This makes you the default World's Leading Expert for this storage format: your storage format. That you invented, not the Indian.

The Indian will now have to have expert methodical read-by-hand, code reviewing skills. Not an AI tool, you need to know how to do this by hand. Because one small change will destroy the integrity of the format. And Claude is not that great about preserving spec integrity unless it's been trained well in advance, which the Indian is never going to do. The Indian would still have to *know* the format well, and he doesn't want to do that work.

By the way, you also get to write the tooling for the format for integrity and data repair, which are now the world's leading authorities on validating the integrity of the dataset.

You might say: Didn't structured datasets go out the window a long time ago? Well, not if you're a protobuf user. And most Indians at this level just use code generators for protobuf, they have no fucking clue what any of the proxy client/server shit is doing. And the number of these guys that can crack open WireShark and read a protobuf stream is absolutely fucking zero.

But beyond using binary structures for the maximal optimum data transfer performance, you likely don't need shit like SQLite/MySQL or some shit Redis instance (just mount the fucking dataset in a RAM volume nbd, stop crying). Instead of reading struct-length by struct-length for your records, you likely want to store data of any size in a field, yeah?. That's easy enough to do if you move all your fixed-sized bytes to the top of the record, and then use sentinel bytes to highlight the any-sized data elements, and record the starting offsets as fields in your fixed-sized structure. Make a reader/writer that can read the data and write in both forward and reverse directions, and holy shit you now have your own database. You can continue stacking only the pieces you need on top of this, and still stay super fucking fast. Oh, while you're doing this, you'll now learn how to read hex dumps and you can get into the world of ROM dumping vintage Gameboy carts in your free time.

If all this sounds too crazy to you, then fuck off and go play with your NoSQL MongofuckDB whatever it is that the Indians are learning on PluralSight right now.

If you're still interested: guess what? Not one fucking human being in India is going to be able to handle this thing. You have now powerleveled yourself closer to the status of Unfireable.

I have some personal overarching rules I go by that I'll share (I have more for specific use cases, loads), but just from heavy interactions with pajeets:

- keep Java as a third Class citizen, and if you can get rid of it or cripple it, do it. Java is a pajeet language, followed by C#

- in SQL, triggers are fun. more fun than sprocs to debug. (but they're great for final data validation, cross-calculations, triggering downstream sprocs, etc). Indians get REALLY confused when you add

WITH(..)ASscratch tables andCURSORover them inside the trigger. Oldschool SQL development design books would tell you to make all your write and transaction entry points as sprocs, so as Indians have conquered much of the tech landscape, this is the pattern that they are used to seeing. Fuck that shit, I use journalizing tables for my writes and triggers take over the work of orchestrating the downstream effects. When they rollback, I leave a dead letter table for the dead soldiers, including very great detail about why the XACT exploded. Not one time have I released a datastore to some offshore Indian they didn't come running back for help because the triggers sent them for a loop. (I can't see why. The loop is self-describing.)

- whenever you are about to reach for a tool library for that "One Small Thing" it has, this is the crucial moment: would it make more sense if I wrote this natively myself?

On this last bullet point, let me talk about something ancient and basic you learned long ago; structured data.

This is remarkably simple at first. (The complexity comes once you introduce multicore/multiuser later on, but it's not that big of a problem.)

In the initial design phase, you start out like this (pseudocode):

Code:

// pseudo

struct FileRecord {

startByte byte[1]

versionCode byte[3]

... any more announcement fields...

startRecord *StructuredDataRecord

position long,

.... any more sentinel bytes before start of the data ...

Code:

sentinelBytes byte[6]

UnstructuredDataSet byte[...]

Code:

capBytes byte[10]

}The picture you're about to create looks like this:

Instead of opening a file and reading a blast of bytes as a string, which you and every Indian knows how to do, you're going to be organized. Neat. Tidy. Contractual. So contractual, in fact, that you're always going to read and write bytes in a specific way, using a specific set of rules. YOUR set of rules. You write the contract. You know everything there is to know about this contract, because you're creating every aspect about it in code. This makes you the default World's Leading Expert for this storage format: your storage format. That you invented, not the Indian.

The Indian will now have to have expert methodical read-by-hand, code reviewing skills. Not an AI tool, you need to know how to do this by hand. Because one small change will destroy the integrity of the format. And Claude is not that great about preserving spec integrity unless it's been trained well in advance, which the Indian is never going to do. The Indian would still have to *know* the format well, and he doesn't want to do that work.

By the way, you also get to write the tooling for the format for integrity and data repair, which are now the world's leading authorities on validating the integrity of the dataset.

You might say: Didn't structured datasets go out the window a long time ago? Well, not if you're a protobuf user. And most Indians at this level just use code generators for protobuf, they have no fucking clue what any of the proxy client/server shit is doing. And the number of these guys that can crack open WireShark and read a protobuf stream is absolutely fucking zero.

But beyond using binary structures for the maximal optimum data transfer performance, you likely don't need shit like SQLite/MySQL or some shit Redis instance (just mount the fucking dataset in a RAM volume nbd, stop crying). Instead of reading struct-length by struct-length for your records, you likely want to store data of any size in a field, yeah?. That's easy enough to do if you move all your fixed-sized bytes to the top of the record, and then use sentinel bytes to highlight the any-sized data elements, and record the starting offsets as fields in your fixed-sized structure. Make a reader/writer that can read the data and write in both forward and reverse directions, and holy shit you now have your own database. You can continue stacking only the pieces you need on top of this, and still stay super fucking fast. Oh, while you're doing this, you'll now learn how to read hex dumps and you can get into the world of ROM dumping vintage Gameboy carts in your free time.

If all this sounds too crazy to you, then fuck off and go play with your NoSQL MongofuckDB whatever it is that the Indians are learning on PluralSight right now.

If you're still interested: guess what? Not one fucking human being in India is going to be able to handle this thing. You have now powerleveled yourself closer to the status of Unfireable.