AI is a bubble that will be popped sooner rather than later. Here's a thread for all AI business news.

A Primer:

As the AI 'circle jerk' rages on, OpenAI, the company behind ChatGPT, will need to raise at least $207 billion more by 2030 simply to keep the lights on, according to a new analysis by HSBC which takes into account recently disclosed megadeals with Microsoft, Amazon and Oracle.

Even with bullish assumptions that include 3 billion users, rapid subscription growth, and a giant slice of enterprise AI spending, the company's projected revenues are nowhere near its exploding bills for energy and chips, the bank says.

"OpenAI is a money pit with a website on top," according to FT's Bryce Elder, who notes that the bigger AI models get, the more cash they burn - and the winner in the LLM landscape may come down to who can continue raising money the longest.

The Math Behind the $207 Billion Hole

HSBC’s model runs through 2030 and arrives at these headline numbers:

- Cumulative data-center rental costs (2025-2030): $792 billion - rising to $1.4 trillion by 2033!

- Projected cumulative free cash flow: $282 billion

- Additional liquidity from Nvidia/AMD deals, undrawn facilities and cash on hand: ~$68 billion

- Net funding shortfall: $207 billion (plus a $10 billion buffer)

The bank's bullish assumptions behind those numbers:

- Total users reach 3 billion by 2030 (44% of global adults outside China), up from ~800 million today

- Paid-subscriber conversion rises from ~5% today to 10% by 2030

- Consumer AI market generates $129 billion annually by 2030 ($87 billion from search, $24 billion from advertising)

- Enterprise AI market hits $386 billion; OpenAI’s share slips from ~50% today to 37%

- Resulting 2030 revenue run-rate: roughly $174 billion (in line with CEO Sam Altman’s public hints of $100 billion by 2027 and continued hypergrowth)
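To put the user and conversion figures above in perspective, here is a rough back-of-envelope sketch of the paid-subscriber count they imply. This is not HSBC's model, just the two bullets multiplied out; the function name `paid_subscribers` is made up for illustration, and the "~" figures from the post are treated as exact.

```python
def paid_subscribers(total_users: float, conversion_rate: float) -> float:
    """Implied paying users: total users times the share that pays."""
    return total_users * conversion_rate


# Figures taken from the list above; the "~" values are treated as exact here.
today = paid_subscribers(800e6, 0.05)   # ~800 million users, ~5% paying
by_2030 = paid_subscribers(3e9, 0.10)   # 3 billion users, 10% paying

print(f"Paid subscribers today:   ~{today / 1e6:.0f} million")
print(f"Paid subscribers by 2030: ~{by_2030 / 1e6:.0f} million")
print(f"Implied growth:           {by_2030 / today:.1f}x")
```

On those inputs the paying base would have to grow from roughly 40 million to roughly 300 million, about 7.5x, which gives a sense of how bullish the "bullish" case is.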

Biggest Challenges To Come

According to the report, there are several pressure points that could worsen this outlook, possibly forcing drastic action...

Investor Fatigue: "If revenue growth doesn’t exceed expectations and prospective investors turn cautious, OpenAI would need to make some hard decisions." -FT

Debt-market jitters: Oracle’s recent bond volatility after its OpenAI deal shows how quickly sentiment can sour.

Contract lock-in: With most cloud deals running for 4-5 years and containing stiff penalties for early exits, OpenAI has little wiggle room.

Competition: "OpenAI’s consumer market share slips to 56 per cent by 2030, from around 71 per cent this year. Anthropic and xAI are both given market shares in the single digits, a mystery “others” is assigned 22 per cent, and Google is excluded entirely." -FT

No AGI in the model: HSBC explicitly excludes any revenue or efficiency windfall from artificial general intelligence - an omission that could prove either prudent or massively conservative.

While HSBC provides a sobering view of OpenAI, they're actually very bullish on AI as a concept...

"We expect AI to penetrate every production process and every vertical, with a great potential for productivity gains at a global level. [ . . . ]

Some AI assets may be overvalued, some may be undervalued too. But eventually, a few incremental basis points of economic growth (productivity-driven) on a USD110trn+ world GDP could dwarf what is often seen as unreasonable capex spending at present."
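For a sense of scale on the "few incremental basis points" line, here is a minimal sketch of what that means in dollars. It assumes world GDP of exactly $110 trillion (the quote says "110trn+", so this is a floor), and the 1, 5 and 10 bp values are illustrative picks, not numbers from the report. The function name is hypothetical.

```python
WORLD_GDP_USD = 110e12  # "USD110trn+" from the quote, treated as a floor


def annual_gain_usd(basis_points: float, gdp_usd: float = WORLD_GDP_USD) -> float:
    """Extra annual output from a growth uplift; 1 basis point = 0.01%."""
    return gdp_usd * basis_points / 10_000


# Illustrative uplifts only; the report does not specify a number.
for bp in (1, 5, 10):
    print(f"{bp:>2} bp -> ${annual_gain_usd(bp) / 1e9:.0f} billion per year")
```

So a single basis point is worth about $11 billion a year on that GDP figure. Whether that ends up dwarfing the capex depends on how many basis points actually show up and for how long, which is exactly the bet.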

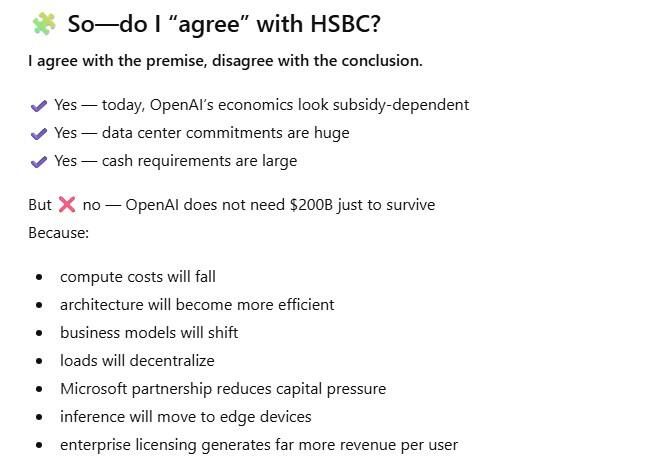

GPT COUNTERS!

For shits and giggles we asked ChatGPT if it thought HSBC was correct in their analysis. While the LLM mostly agreed, it said that the bank ignored:

- architectural efficiency improvements

- distillation

- sparse expert models

- on-device inference

- agent-delegated execution

- reinforcement-learning-optimized efficiency

- quantization

- open-weight local models replacing cloud calls
